SPECsfs2008_nfs.v3 Result

Isilon Systems, LLC.: S200-6.9TB-200GB-48GB-10GBE - 140 Nodes

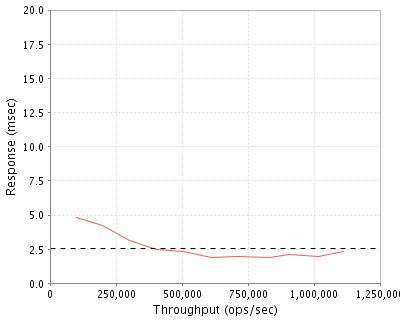

SPECsfs2008_nfs.v3 = 1112705 Ops/Sec (Overall Response Time = 2.54 msec)

Performance

| Throughput (ops/sec) | Response (msec) |
|---|---|
| 100124 | 4.8 |
| 200493 | 4.2 |
| 301372 | 3.1 |
| 401911 | 2.5 |
| 507219 | 2.3 |
| 611887 | 1.9 |
| 708723 | 2.0 |
| 829790 | 1.9 |
| 906386 | 2.1 |
| 1016284 | 2.0 |
| 1112705 | 2.3 |
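The headline Overall Response Time can be cross-checked against the load points in the Performance table. The following is a minimal sketch, assuming the figure is the area under the response-time versus throughput curve divided by the peak throughput, integrated with the trapezoidal rule and with the curve anchored at the origin. The function name `overall_response_time` is illustrative and not part of the SPECsfs2008 tooling. Under these assumptions, the rounded table values reproduce the reported 2.54 msec.

```python
# (throughput in ops/sec, response time in msec) pairs from the Performance table.
points = [
    (100124, 4.8),
    (200493, 4.2),
    (301372, 3.1),
    (401911, 2.5),
    (507219, 2.3),
    (611887, 1.9),
    (708723, 2.0),
    (829790, 1.9),
    (906386, 2.1),
    (1016284, 2.0),
    (1112705, 2.3),
]


def overall_response_time(load_points):
    """Area under the response-time curve divided by peak throughput.

    Assumption: trapezoidal integration, with the curve starting at (0, 0).
    """
    curve = [(0, 0.0)] + sorted(load_points)
    area = 0.0
    for (x0, y0), (x1, y1) in zip(curve, curve[1:]):
        area += (x1 - x0) * (y0 + y1) / 2.0
    peak_throughput = curve[-1][0]
    return area / peak_throughput


ort = overall_response_time(points)
print(f"{ort:.2f} msec")  # prints "2.54 msec"
```

Because the table values are rounded to one decimal place, the result agrees with the published figure only to the precision shown.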

Product and Test Information

| | |
|---|---|
| Tested By | Isilon Systems, LLC. |
| Product Name | S200-6.9TB-200GB-48GB-10GBE - 140 Nodes |
| Hardware Available | April 2011 |
| Software Available | March 2011 |
| Date Tested | May 2011 |
| SFS License Number | 47 |
| Licensee Locations | Hopkinton, MA |

The Isilon S200, built on Isilon's proven scale-out storage platform, provides enterprises with industry-leading IO/s from a single file system, single volume. The S200 accelerates business and increases speed-to-market by providing scalable, high-performance storage for mission-critical and highly transactional applications. In addition, the single file system, single volume, and linear scalability of the OneFS operating system enable enterprises to scale storage seamlessly with their environment and applications while maintaining flat operational expenses. The S200 is based on enterprise-class 2.5" 10,000 RPM Serial Attached SCSI drive technology, 10GbE networking, dual quad-core Intel CPUs, a high-performance Infiniband back-end, and up to 13.8 TB of globally coherent cache. The S200 scales from as few as 3 nodes to as many as 144 nodes in a single file system, single volume.

Configuration Bill of Materials

| Item No | Qty | Type | Vendor | Model/Name | Description |
|---|---|---|---|---|---|
| 1 | 140 | Storage Node | Isilon | S200-6.9TB & 200GB SSD, 48GB RAM, 2x10GE SFP+ & 2x1GE | S200 6.9TB SAS + SSD Storage node |
| 2 | 140 | Software License | Isilon | OneFS 6.5.1 | OneFS 6.5.1 License |
| 3 | 1 | Infiniband Switch | QLogic | 9120-144 | 144 Port DDR Infiniband Switch |

Server Software

| | |
|---|---|
| OS Name and Version | OneFS 6.5.1 |
| Other Software | N/A |
| Filesystem Software | OneFS |

Server Tuning

| Name | Value | Description |
|---|---|---|
| sysctl vfs.nfsrv.rpc.maxthreads | 64 | allow 64 nfsd threads per node |

Server Tuning Notes

N/A

Disks and Filesystems

| Description | Number of Disks | Usable Size |
|---|---|---|
| 300GB SAS 10k RPM Disk Drives | 3220 | 839.0 TB |
| 200GB SSD | 140 | 25.0 TB |
| Total | 3360 | 864.0 TB |

| | |
|---|---|
| Number of Filesystems | 1 |
| Total Exported Capacity | 864.0 TB |
| Filesystem Type | IFS |
| Filesystem Creation Options | Default |
| Filesystem Config | 13+1 Parity Protected |
| Fileset Size | 128889.2 GB |

Default SSD policy stores one mirror of all metadata on SSD. File data for a given file is striped across 14 nodes.
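The stripe geometry implied by these notes can be worked out with simple arithmetic. This is a rough sketch, assuming that "13+1" reads as 13 data units plus 1 parity unit per stripe, which is consistent with the stated stripe width of 14 nodes. It does not model metadata mirroring or how OneFS actually places blocks, and the variable names are illustrative.

```python
# Assumption: "13+1 Parity Protected" means 13 data units + 1 parity unit per stripe.
DATA_UNITS = 13
PARITY_UNITS = 1

stripe_width = DATA_UNITS + PARITY_UNITS       # one unit per node in the stripe
data_fraction = DATA_UNITS / stripe_width      # share of each stripe holding file data
parity_overhead = PARITY_UNITS / stripe_width  # share of each stripe holding parity

print(stripe_width, f"{data_fraction:.1%}", f"{parity_overhead:.1%}")
# prints "14 92.9% 7.1%"
```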

Network Configuration

| Item No | Network Type | Number of Ports Used | Notes |
|---|---|---|---|
| 1 | 10GbE with Jumbo Frames | 140 | 10GbE SFP+ PCIe NIC |

Network Configuration Notes

The Brocade MLXe-32 was configured with 232 wire-speed 10GbE ports, delivering full utilization of all links and instantaneous link or node failover. Non-stop networking is further achieved through hitless failover and software upgrades and through fully redundant hardware components. The configuration provided a single VLAN, with STP disabled and jumbo frame support enabled.

Benchmark Network

Each load generator and each S200 storage node was configured with a single 10GbE, 9000 MTU connection to the MLXe-32.

Processing Elements

| Item No | Qty | Type | Description | Processing Function |
|---|---|---|---|---|
| 1 | 280 | CPU | Intel E5620, Quad-Core CPU, 2.40 GHz | Network, NFS, Filesystem, Device Drivers |

Processing Element Notes

Each storage node has 2 physical processors with 4 processing cores each.

Memory

| Description | Size in GB | Number of Instances | Total GB | Nonvolatile |
|---|---|---|---|---|
| Storage Node System Memory | 48 | 140 | 6720 | V |
| Storage Node Integrated NVRAM module | 0.5 | 140 | 70 | NV |
| Grand Total Memory Gigabytes | | | 6790 | |

Memory Notes

Each storage controller has main memory that is used for the operating system and for caching filesystem data. A separate, integrated battery-backed RAM module is used to provide stable storage for writes that have not yet been written to disk.

Stable Storage

Each storage node is equipped with an NVRAM journal that stores writes to the local disks.

The NVRAM is protected by 2 batteries, providing stable storage for more than 72 hours.

In the event of a double battery failure, the node will no longer write to the local disks, but continues to write to the remaining storage nodes.

System Under Test Configuration Notes

The system under test consisted of 140 S200 storage nodes, 2U each, connected by DDR Infiniband. Each storage node was configured with a single 10GbE network interface connected to a 10GbE switch.
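The per-node figures in this report can be rolled up and checked against the published totals. The following is a minimal sketch using only values stated in the tables of this report. The per-node, per-disk, and per-core throughput figures it prints are derived conveniences, not metrics defined or reported by SPEC, and all variable names are illustrative.

```python
# Values taken from the report's tables.
NODES = 140
CPUS_PER_NODE = 2
CORES_PER_CPU = 4
RAM_GB_PER_NODE = 48
NVRAM_GB_PER_NODE = 0.5
SAS_DISKS = 3220
SSDS = 140
PEAK_OPS_PER_SEC = 1112705

# Totals that should match the Processing Elements, Memory, and Disks tables.
cpus = NODES * CPUS_PER_NODE                              # 280
cores = cpus * CORES_PER_CPU                              # 1120
memory_gb = NODES * (RAM_GB_PER_NODE + NVRAM_GB_PER_NODE)  # 6790.0
disks = SAS_DISKS + SSDS                                  # 3360
sas_per_node = SAS_DISKS // NODES                         # 23

# Derived throughput ratios (not SPEC metrics).
ops_per_node = PEAK_OPS_PER_SEC / NODES
ops_per_disk = PEAK_OPS_PER_SEC / disks
ops_per_core = PEAK_OPS_PER_SEC / cores

print(f"CPUs: {cpus}, cores: {cores}, memory: {memory_gb:.0f} GB, disks: {disks}")
print(f"ops/sec per node: {ops_per_node:.0f}")
print(f"ops/sec per disk: {ops_per_disk:.0f}")
print(f"ops/sec per core: {ops_per_core:.0f}")
```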

Other System Notes

Test Environment Bill of Materials

| Item No | Qty | Vendor | Model/Name | Description |
|---|---|---|---|---|
| 1 | 35 | Dell | R610 | 1U Linux client, dual 6-core CPU, 48GB RAM |
| 2 | 1 | Brocade | MLXe32 | Brocade NetIron MLXe32 Chassis with 10GbE blades |

Load Generators

| | |
|---|---|
| LG Type Name | LG1 |
| BOM Item # | 1 |
| Processor Name | Intel E5645 |
| Processor Speed | 2.40 GHz |
| Number of Processors (chips) | 2 |
| Number of Cores/Chip | 6 |
| Memory Size | 48 GB |
| Operating System | CentOS release 5.5 kernel 2.6.18-164.11.1.el5 |
| Network Type | Intel 82598EB 10-Gigabit AF |

Load Generator (LG) Configuration

Benchmark Parameters

| | |
|---|---|
| Network Attached Storage Type | NFS V3 |
| Number of Load Generators | 35 |
| Number of Processes per LG | 64 |
| Biod Max Read Setting | 8 |
| Biod Max Write Setting | 8 |
| Block Size | AUTO |

Testbed Configuration

| LG No | LG Type | Network | Target Filesystems | Notes |
|---|---|---|---|---|
| 1..35 | LG1 | 1 | /ifs/data | |

Load Generator Configuration Notes

All clients were connected to a single filesystem through all storage nodes.

Uniform Access Rule Compliance

Each load-generating client hosted 64 processes. The assignment of processes to network interfaces was done such that they were evenly divided across all network paths to the storage controllers. The filesystem data was striped evenly across all disks and storage nodes.
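The even division described above can be illustrated with a short sketch. The report does not say how processes were mapped to storage nodes, so the round-robin scheme below is an assumption chosen only to show that 35 load generators with 64 processes each divide evenly across 140 nodes. The function name `assign_round_robin` is hypothetical.

```python
from collections import Counter

LOAD_GENERATORS = 35
PROCESSES_PER_LG = 64
STORAGE_NODES = 140


def assign_round_robin(lgs, procs_per_lg, nodes):
    """Map each (load generator, process) pair to a storage-node index.

    Assumption: simple round-robin over a global process index; the actual
    assignment used in the test is not described in the report.
    """
    assignment = {}
    for lg in range(lgs):
        for proc in range(procs_per_lg):
            global_index = lg * procs_per_lg + proc
            assignment[(lg, proc)] = global_index % nodes
    return assignment


assignment = assign_round_robin(LOAD_GENERATORS, PROCESSES_PER_LG, STORAGE_NODES)
per_node = Counter(assignment.values())

print(len(assignment), min(per_node.values()), max(per_node.values()))
# prints "2240 16 16"
```

With 2240 processes and 140 nodes, every node receives exactly 16 processes under this scheme, so no node carries more load-generating processes than another.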

Other Notes

Config Diagrams

Generated on Mon Jun 27 00:33:42 2011 by SPECsfs2008 HTML Formatter

Copyright © 1997-2008 Standard Performance Evaluation Corporation

First published at SPEC.org on 27-Jun-2011